|

I am currently a Senior Research Scientist at DeepMind. I completed my PhD at MIT under the supervision of Josh Tenenbaum in the Computational Cognitive Science group, and received my B.Sc. in physics from the University of British Columbia. |

|

|

I study cognitive science, machine learning, and robotics. I am particularly interested in tool use and tool creation, which can range from everyday challenges, like wielding a broom to get something from under a couch, to grand challenges in science and engineering, like creating wind turbines or rocket ships. At both extremes, people adaptively reshape their environments in ways that expand the scope of what they are individually capable of. My research investigates which computational mechanisms support these complex behaviors in humans, and tries to endow machines with similar abilities to flexibly and appropriately solve new challenges.

An asterisk (*) denotes equal contribution.

Selected projects |

|

Kelsey R. Allen*, Franziska Brändle*, Matthew Botvinick, Judith E. Fan, Samuel J. Gershman, Alison Gopnik, Thomas L. Griffiths, Joshua K. Hartshorne, Tobias U. Hauser, Mark K. Ho, Joshua R. de Leeuw, Wei Ji Ma, Kou Murayama, Jonathan D. Nelson, Bas van Opheusden, Thomas Pouncy, Janet Rafner, Iyad Rahwan, Robb B. Rutledge, Jacob Sherson, Özgür Şimşek, Hugo Spiers, Christopher Summerfield, Mirko Thalmann, Natalia Vélez, Andrew J. Watrous, Joshua B. Tenenbaum, Eric Schulz Nature Human Behaviour 2024 In this perspective article, we argue that games, by being intuitive and fun, offer many advantages over classical experimental paradigms. We discuss the potential and pitfalls of games as a research platform, and provide guidelines for using games in your own experiments. |

|

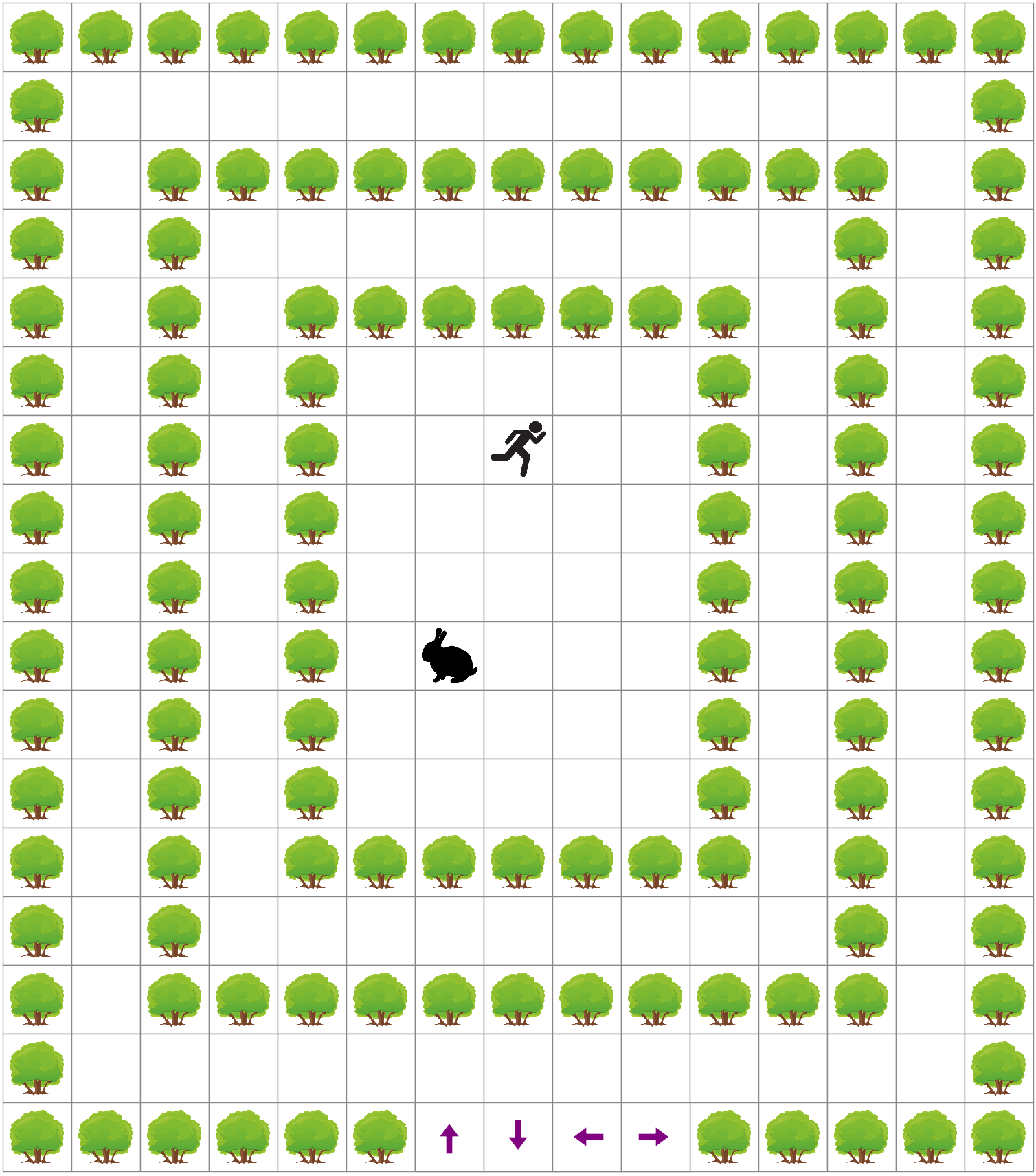

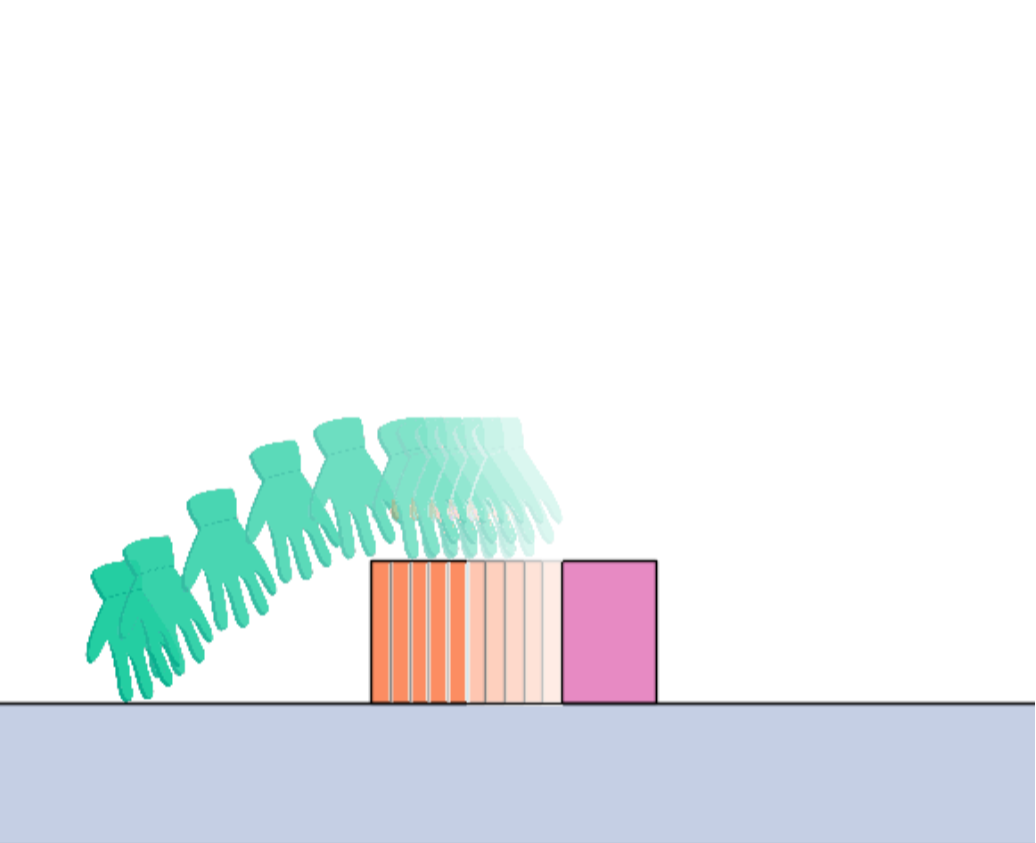

Kelsey R. Allen*, Kevin Smith*, Laura Ashleigh-Bird, Josh Tenenbaum, Tamar Makin+, Dorothie Cowie+ Psychonomic Bulletin and Review 2024 [Blog post] [Code / Data] We compare people (children and adults) born with congenital limb differences (fewer than two hands at birth) to those born without limb differences on the Virtual Tools game. We find that children and adults born with limb differences solve the game differently from those without. They take fewer attempts to solve each game level, but spend more time thinking between each attempt, relative to people born with two hands. |

|

William F. Whitney*, Tatiana Lopez-Guevara*, Tobias Pfaff, Yulia Rubanova, Thomas Kipf, Kimberly Stachenfeld, Kelsey R. Allen International Conference on Learning Representations (ICLR) 2024 [Website] We develop the first fully unsupervised learned 3D simulator, which requires no object masks, ground-truth physical state information, or point correspondences at training time. The simulator is learned end-to-end from multi-view RGB-Depth sensors, and supports 3D editing, novel view rendering, and stable physics rollouts. |

|

Kelsey Allen*, Yulia Rubanova*, Tatiana Guevara, William Whitney, Alvaro Sanchez-Gonzalez, Peter Battaglia, Tobias Pfaff International Conference on Learning Representations (ICLR) 2023 (Spotlight) [Website] We create a new graph network architecture for modeling rigid body dynamics, using insights from computer graphics about how to represent and resolve collisions. We show that our face interaction graph network (FIGNet) can dramatically improve both the accuracy and efficiency of learned simulators for complex rigid body datasets in simulation, while also being up to 2x more accurate than tuned analytic simulators (such as MuJoCo and PyBullet) when fit to real-world data. |

|

Kelsey Allen*, Tatiana Guevara*, Kimberly Stachenfeld*, Alvaro Sanchez-Gonzalez, Peter Battaglia, Jessica Hamrick, Tobias Pfaff NeurIPS 2022 [Website] Using graph neural networks, we learn generic, differentiable physical simulators from offline, task-agnostic datasets. We take gradients through these learned simulators to optimize physical designs for a variety of tasks without further fine-tuning. This method can create 2D containers, funnels, and ramps, 3D landscapes to redirect water, and can be used to design an airfoil as performant as those found with highly engineered solvers. |

|

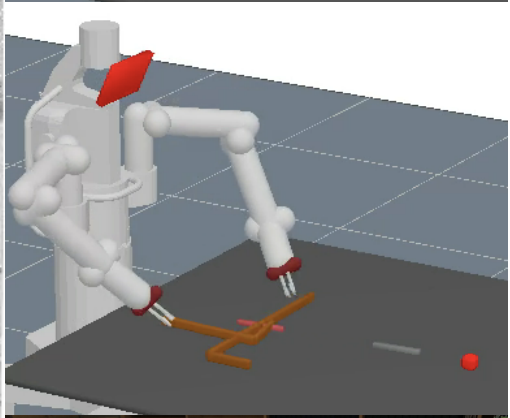

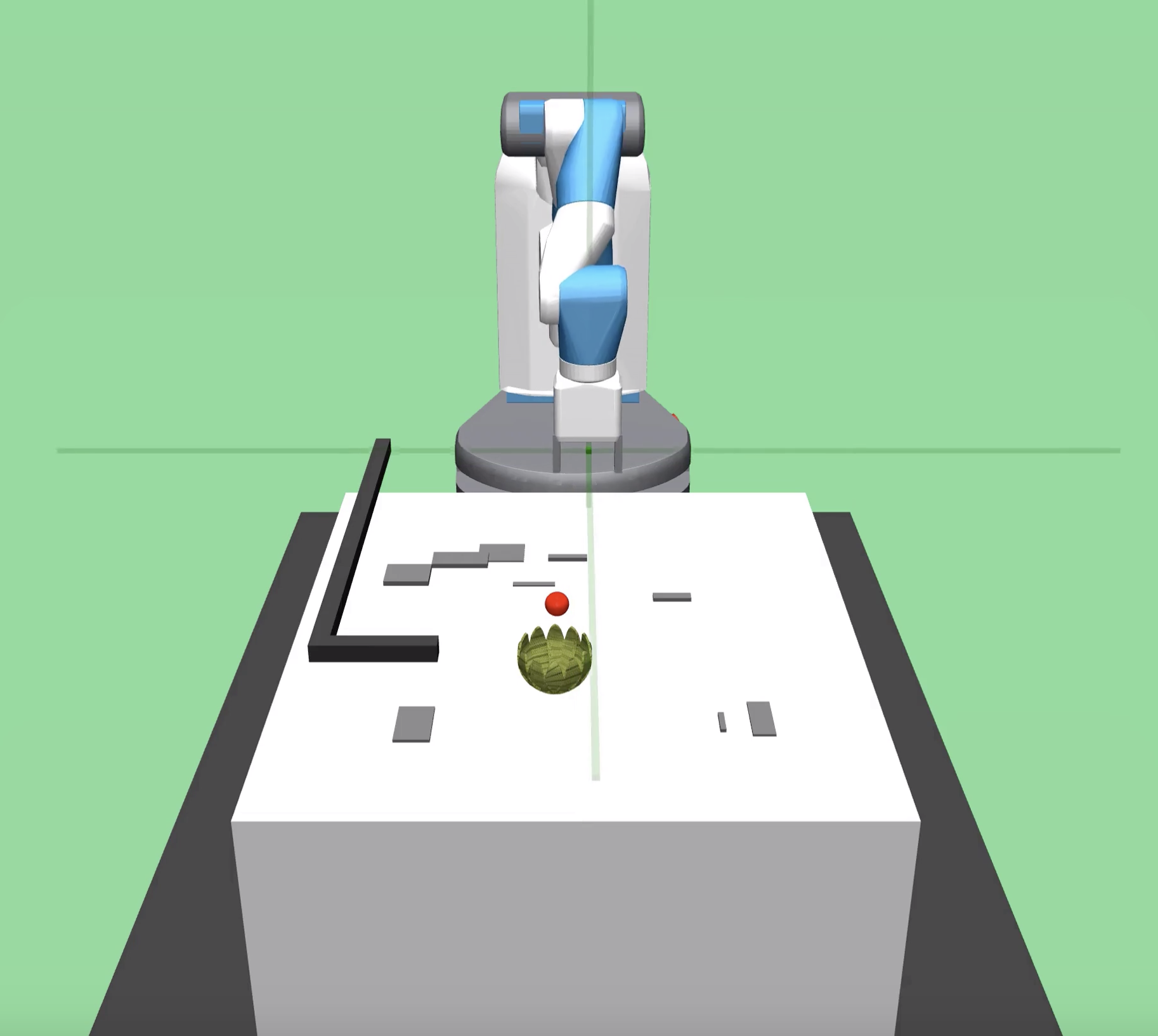

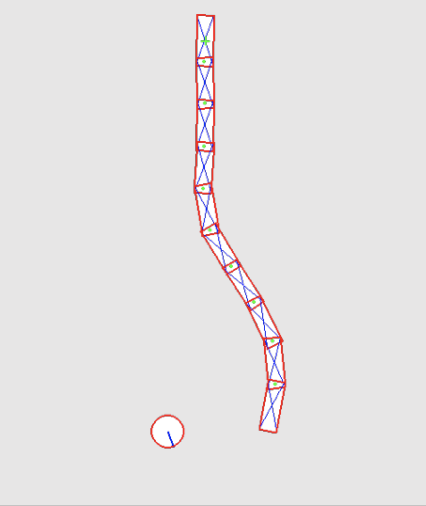

Kelsey Allen*, Kevin Smith*, Josh Tenenbaum PNAS 2020 Cognitive Science Society 2019 (Oral Presentation) ICLR SPiRL Workshop 2019 RLDM 2019 [Website] We present a new domain for testing physical problem-solving skills in humans and machines. In these tool-use problems, people demonstrate both "a-ha" insights and local optimization, solving each level in 1-10 attempts. We present a model that uses model-based policy optimization with structured object-oriented priors and mimics human performance. In contrast, a deep reinforcement learning baseline is unable to generalize effectively. |

|

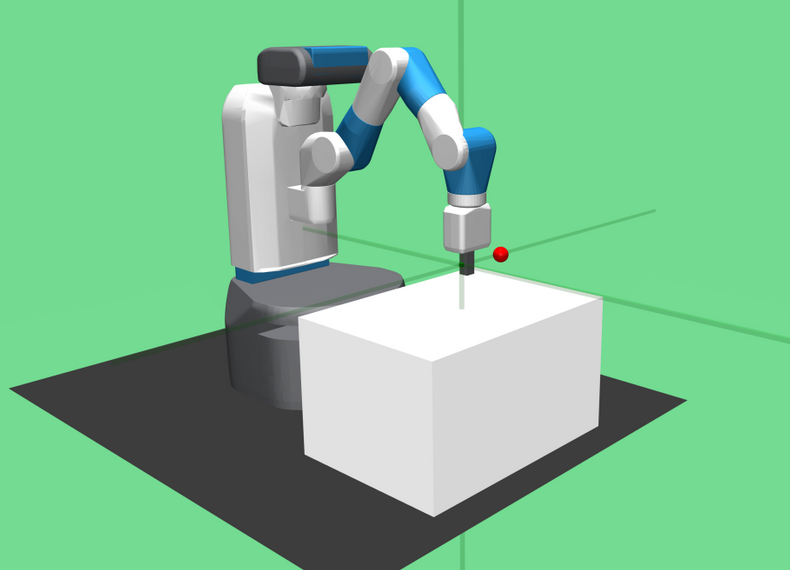

Marc Toussaint, Kelsey Allen, Kevin Smith, Joshua B Tenenbaum Robotics: Science and Systems (RSS) 2018 (Best Paper) [Code] [Video] We integrate Task And Motion Planning (TAMP) with primitives that impose stable kinematic constraints or differentiable dynamical and impulse exchange constraints at the path optimization level. This enables the approach to solve a variety of physical puzzles involving tool-use and dynamic interactions, in a similar way to humans on these same puzzles. |

|

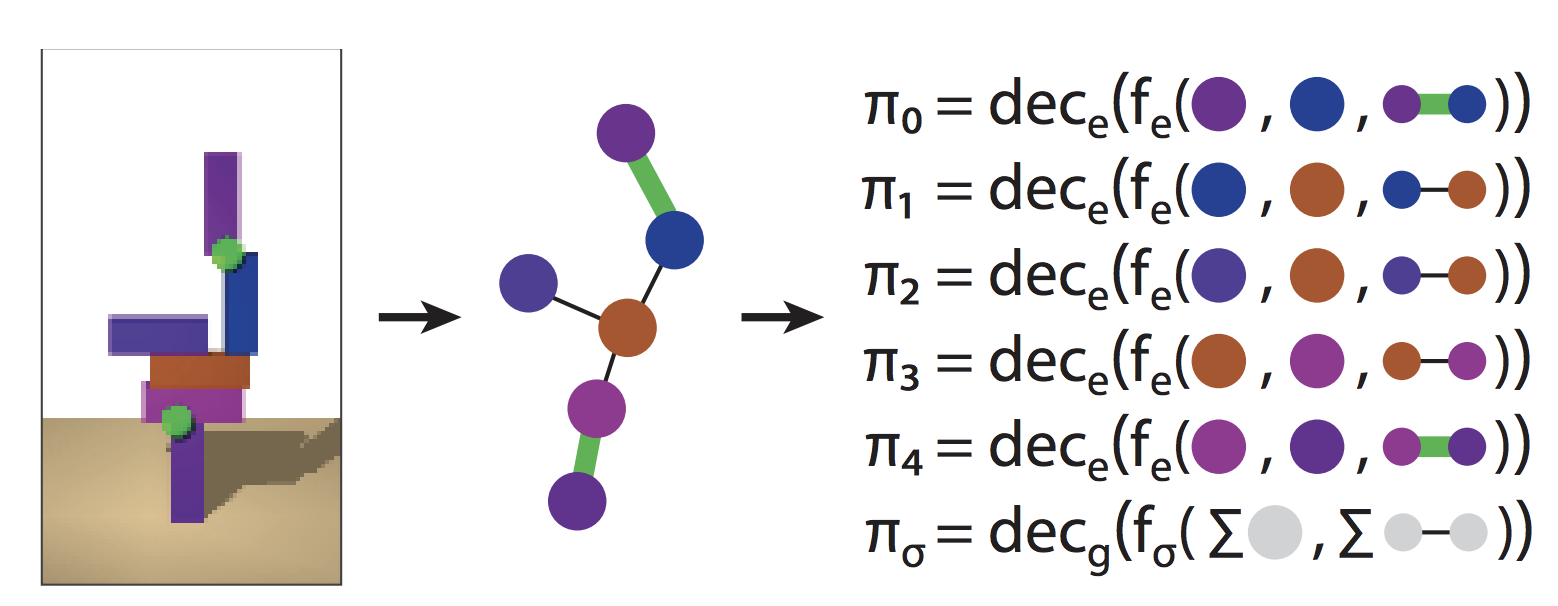

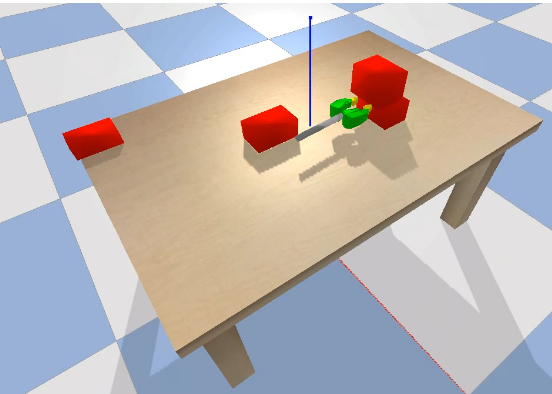

Jessica Hamrick*, Kelsey Allen*, Victor Bapst, Tina Zhu, Kevin R McKee, Joshua B Tenenbaum, Peter W Battaglia Cognitive Science Society 2018 We introduce a deep reinforcement learning agent whose policy is represented over the edges of a graph. We demonstrate that this method can learn how to glue blocks together to keep a block tower stable, and that it generalizes across block towers of different sizes. The relational policy representation obtains superhuman performance on this task. |

| Selected projects for few-shot learning |

|

Tom Silver, Kelsey Allen, Leslie Kaelbling, Josh Tenenbaum ICLR SPiRL Workshop 2019 RLDM 2019 [Website] [Code] [Video] We can learn policies from five or fewer demonstrations that generalize to dramatically different test task instances. |

|

Kelsey Allen, Evan Shelhamer*, Hanul Shin*, Josh Tenenbaum ICML 2019 We present infinite mixture prototypes (IMP), which adaptively adjust model capacity by representing classes as sets of clusters and inferring their number. IMP can be trained in fully or semi-supervised settings, and generalizes well to sub-class testing from super-class training (with 10-25% improvements over previous approaches). |

|

More AI/ML projects |

|

Kelsey Allen*, Tatiana Lopez-Guevara*, Yulia Rubanova, Kimberly Stachenfeld, Alvaro Sanchez-Gonzalez, Peter Battaglia, Tobias Pfaff Conference on Robot Learning 2022 [Website] Through a set of careful experiments, we show that graph network simulators can model the discontinuities common to rigid body dynamics, which arise when impulse is instantly exchanged between two objects. Furthermore, we show that graph network simulators can even outperform tuned analytic simulators such as Drake, PyBullet and MuJoCo for real-world rigid body dynamics with as few as 16 trajectories of real-world data. |

|

Tom Silver*, Kelsey Allen*, Josh Tenenbaum, Leslie Kaelbling arXiv 2018 [Website] [Code] [Video] We present a simple method for improving nondifferentiable policies by learning a "residual" correction to the policy with model-free deep reinforcement learning. |

|

Victoria Xia*, Zi Wang*, Kelsey Allen, Tom Silver, Leslie Kaelbling ICLR 2019 We present a method for describing and learning transition models in complex uncertain domains using relational rules. |

|

João Loula, Tom Silver, Kelsey Allen, Josh Tenenbaum ICML MBRL Workshop 2019 We present a model that learns mode constraints from expert demonstrations. We show that it is data-efficient, and that it learns interpretable representations that it can leverage to plan effectively in out-of-distribution environments. |

|

Filipe de Avila Belbute-Peres, Kevin Smith, Kelsey Allen, Josh Tenenbaum, J. Zico Kolter NeurIPS 2018 (Spotlight) [Code] We develop a differentiable physics engine in PyTorch which can be incorporated into standard end-to-end pipelines for model-based learning and control. |

|

Peter W Battaglia, Jessica B Hamrick, Victor Bapst, Alvaro Sanchez-Gonzalez, Vinicius Zambaldi, Mateusz Malinowski, Andrea Tacchetti, David Raposo, Adam Santoro, Ryan Faulkner, Caglar Gulcehre, Francis Song, Andrew Ballard, Justin Gilmer, George Dahl, Ashish Vaswani, Kelsey Allen, Charles Nash, Victoria Langston, Chris Dyer, Nicolas Heess, Daan Wierstra, Pushmeet Kohli, Matt Botvinick, Oriol Vinyals, Yujia Li, Razvan Pascanu arXiv 2018 [Code] [Tutorial Video] We summarize and provide insights on a large body of literature on Graph Neural Networks (GNNs). |

| More cognitive science/neuroscience projects |

|

João Loula, Tom Silver, Kelsey Allen, Josh Tenenbaum Cognitive Science Society 2019 We present a model that starts out with a language of low-level physical constraints and, by observing expert demonstrations, builds up a library of high-level concepts that afford planning and action understanding. |

|

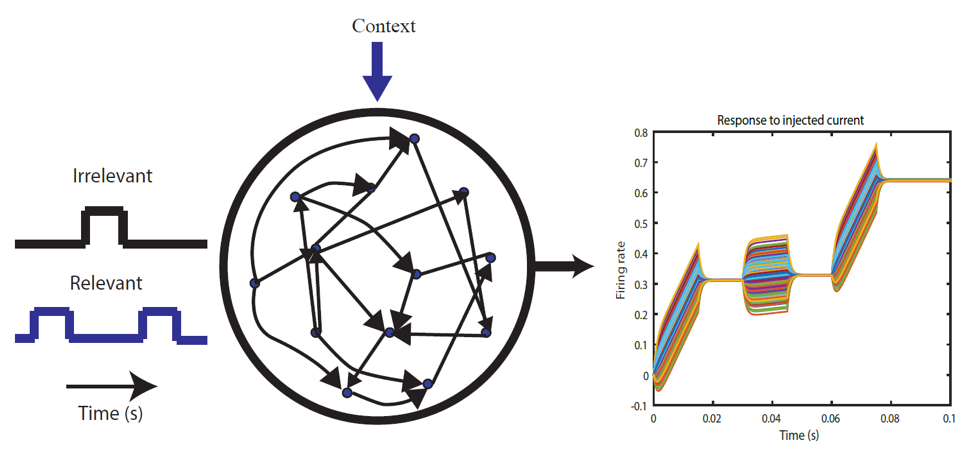

Jonathan Gill, Kelsey Allen, Alexander Williams, Mark Goldman Cosyne 2019 We present a simple method for achieving context-dependent filtering of incoming signals in biologically plausible neural networks. |

|

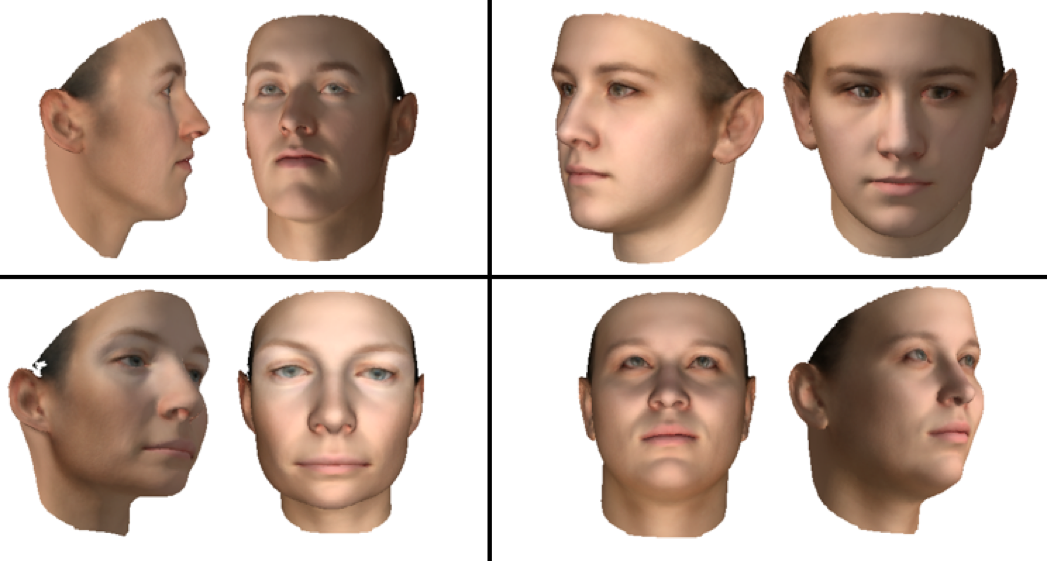

Kelsey Allen, Ilker Yildirim, Joshua B Tenenbaum Cognitive Science Society 2016 (Oral Presentation) We present a framework for explaining differences between familiar and unfamiliar face processing which combines feedforward neural networks with non-parametric generative models. |

|

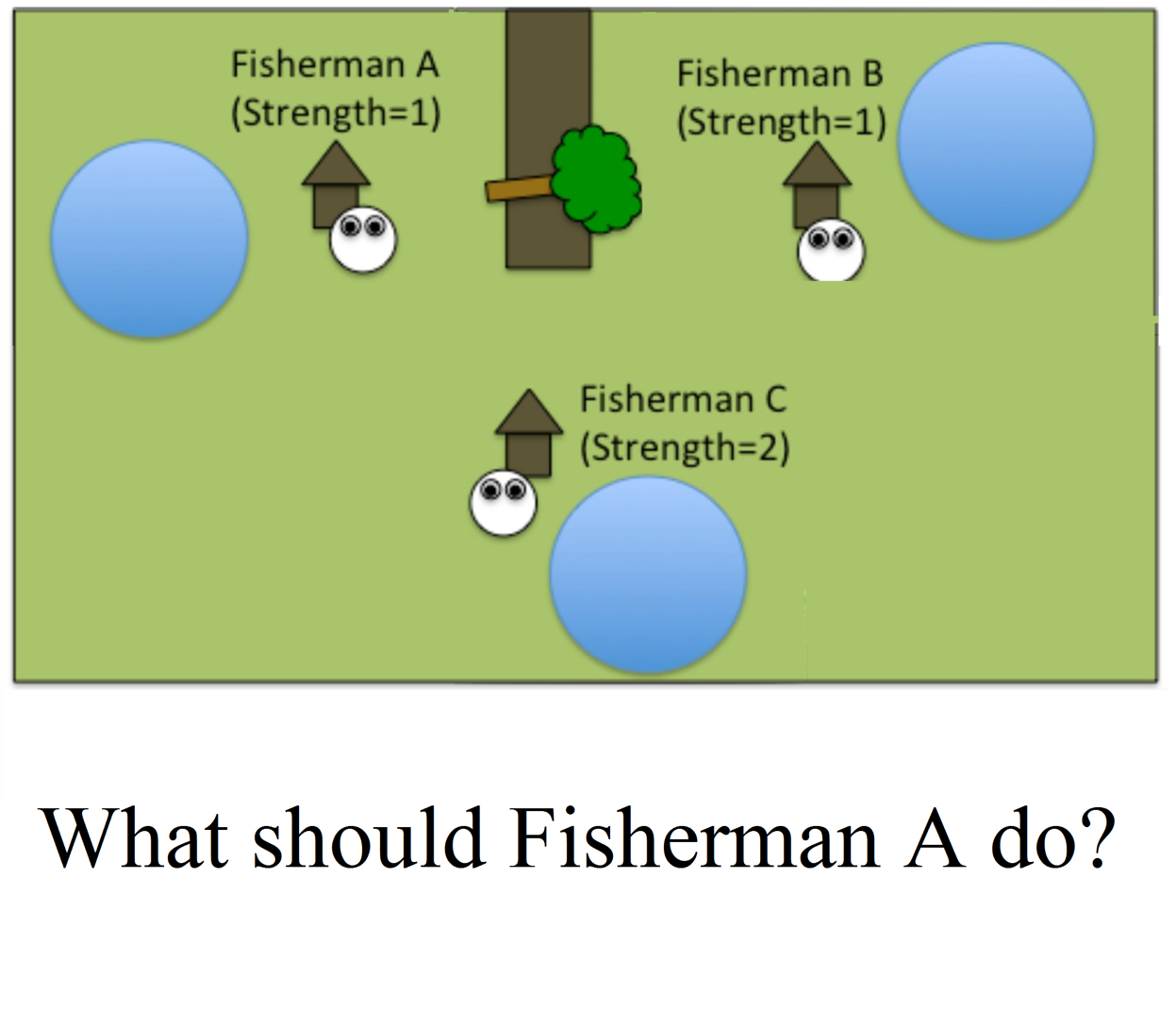

Kelsey Allen, Julian Jara-Ettinger, Tobias Gerstenberg, Max Kleiman-Weiner, Joshua B Tenenbaum Cognitive Science Society 2015 We present a coordination game in which three agents must use their knowledge of each other's abilities to determine the best action to take. We show that a model that acts to maximize utility under recursive theory of mind, combined with a measure of outcome, best predicts human decisions. |

| From my undergraduate days (physics, ants and language) |

|

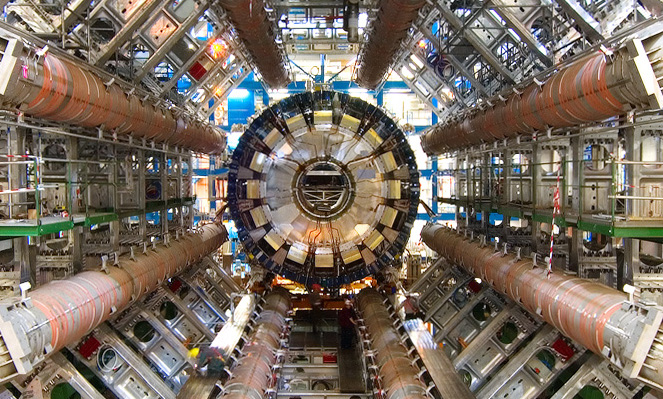

ATLAS Collaboration Physical Review D 2014 I contributed analyses to determine new limits for particles that might emerge from minimal Z' models. |

|

Evlyn Pless, Jovel Queirolo, Noa Pinter-Wollman, Sam Crow, Kelsey Allen, Maya B Mathur, Deborah M Gordon PLoS One 2015 We analyzed the foraging patterns of red harvester ants and found that ants which had greater numbers of antennal contacts with returning foragers were more likely to leave the nest to forage. |

|

Kelsey Allen, Giuseppe Carenini, Raymond Ng EMNLP 2014 We developed a new set of features based on the automatically generated rhetorical structure of online forum conversations, and show that these features are helpful for detecting disagreement. |
